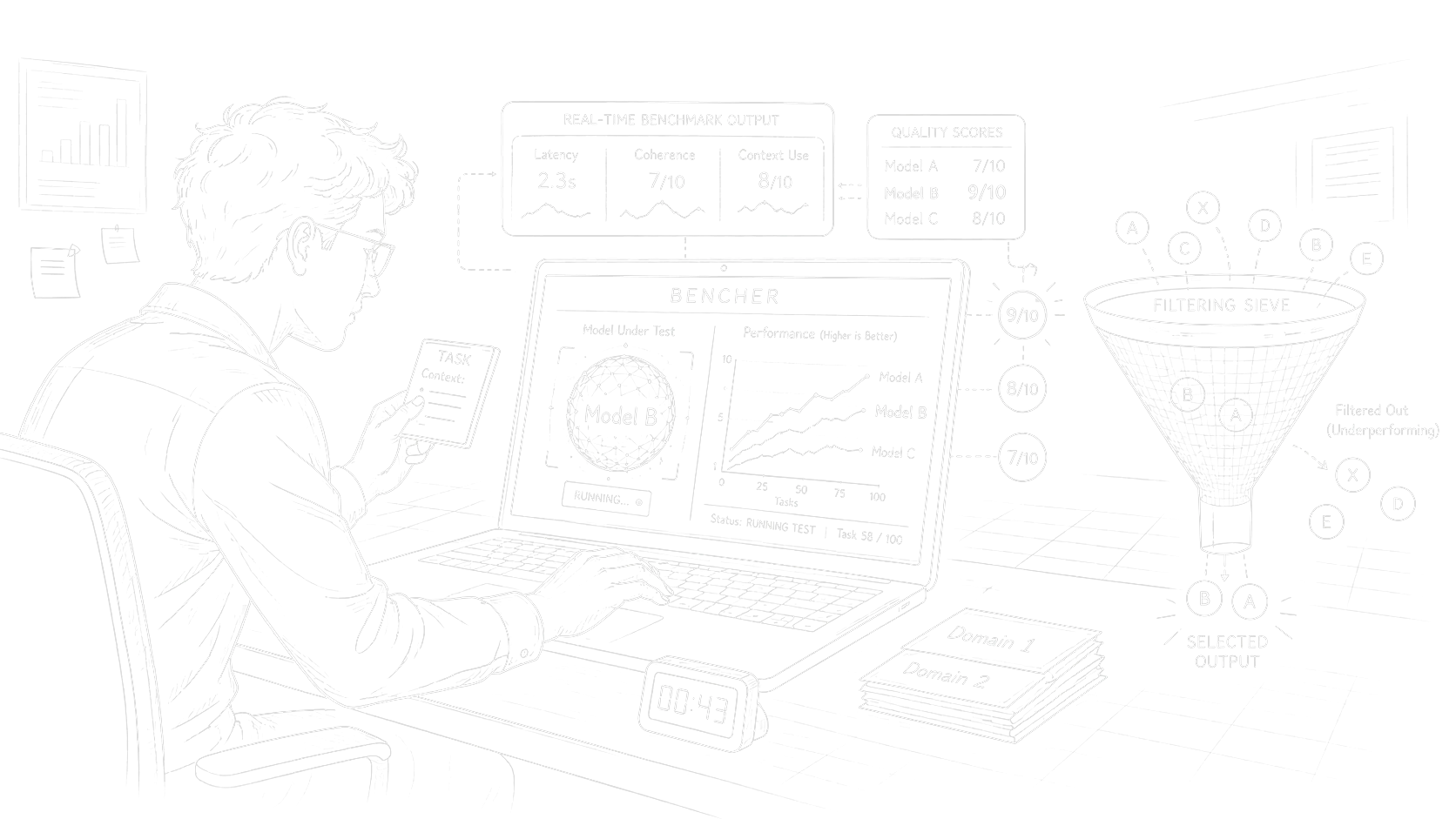

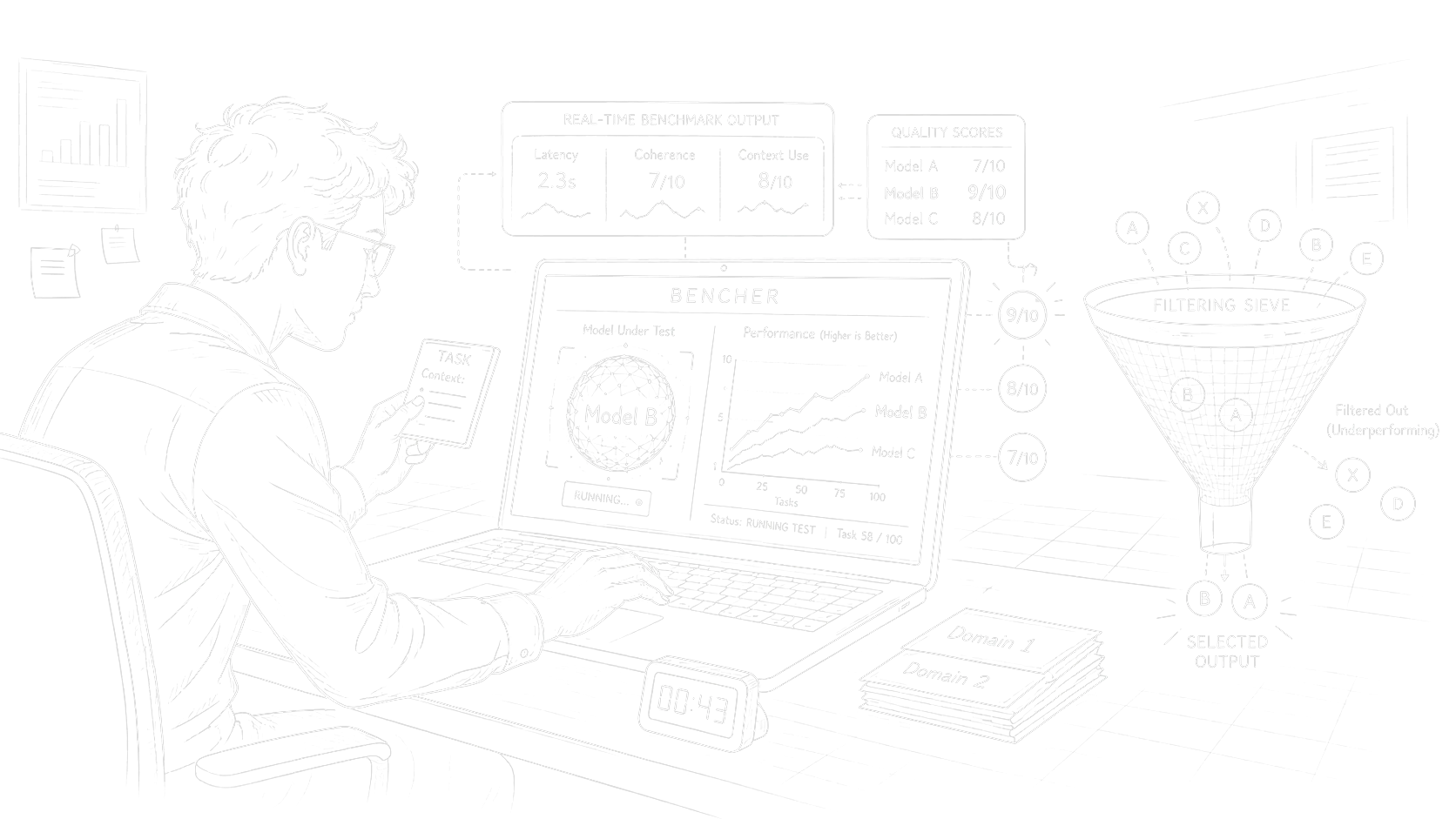

Benchmark Harness / Bencher

An open-source benchmark for real orchestrator work: tool calls, chart building, domain fixtures, prompt quality levels, and runtime plus LLM judges.

Python package - Published Apr 2026

The Lab is where Factory tools become public: benchmarks, profiling SDKs, and small infrastructure pieces that first proved useful in our own AI systems.

An open-source Python SDK for evidence-based user modeling in LLM apps. It turns chat history into structured profiles with evidence, grades, confidence, and follow-up questions.

Python SDK - Active development

Working stack

The runtime around the model: what it sees, what it can call, how it stays cheap, and how it streams back.

How agent steps become runnable graphs: routing, retries, parallelism, and durable execution for work that can't be re-run for free.

Traces, prompt versions, datasets, costs, evals, releases - the operating loop that lets us change a prompt without breaking a product.

Provider and local models picked by task shape - tool reliability, latency, context length, cost, and a path to fine-tuning when the domain needs it.

The Factory ships for its own practice first, publishes what becomes useful, and helps outside teams when the problem fits our craft.