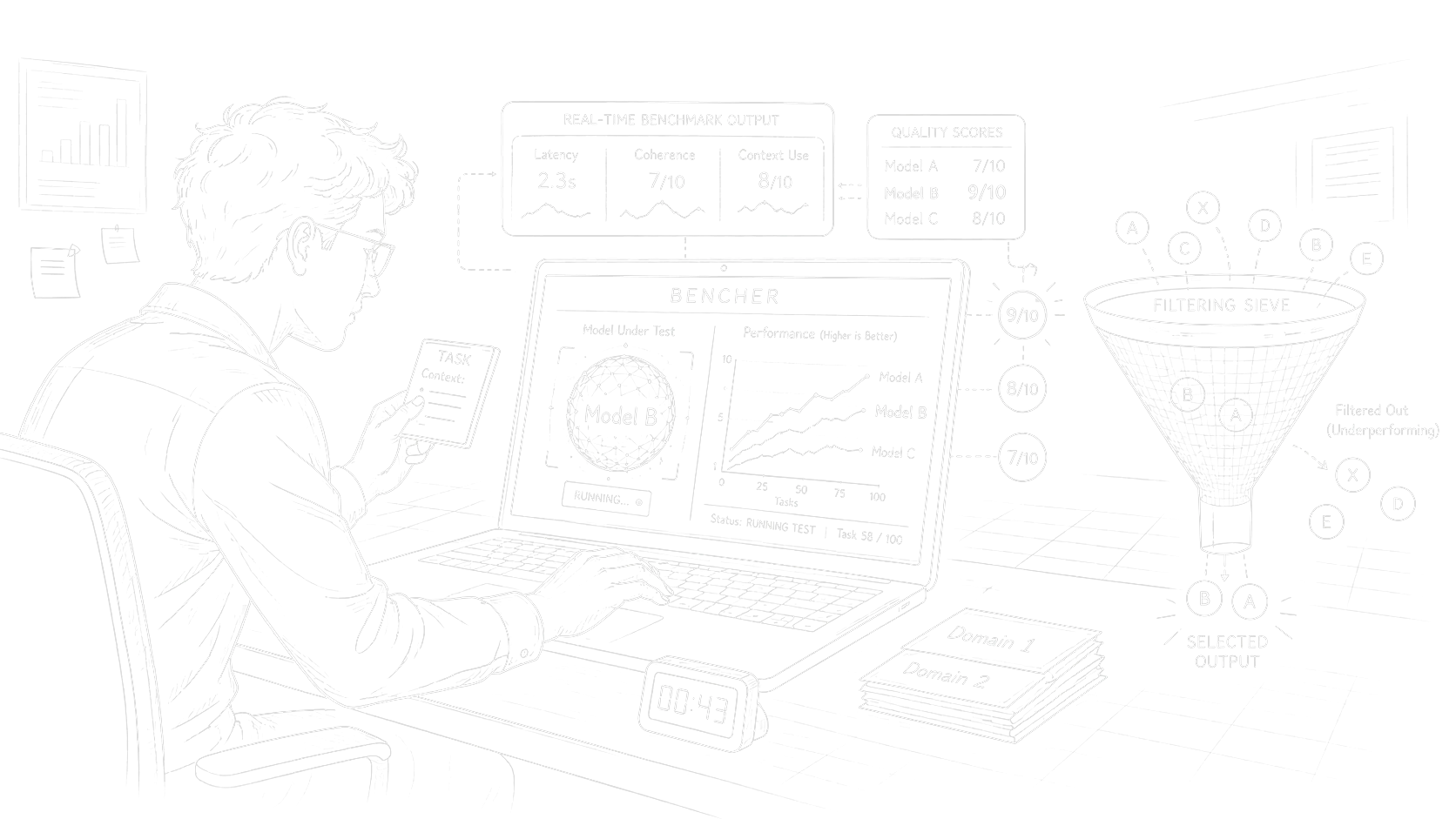

Bencher MVP

Bencher is an open-source Python benchmark for model checks that look like production work instead of leaderboard puzzles.

If you do not want to read the whole argument, give the repository to an LLM: the README has enough context.

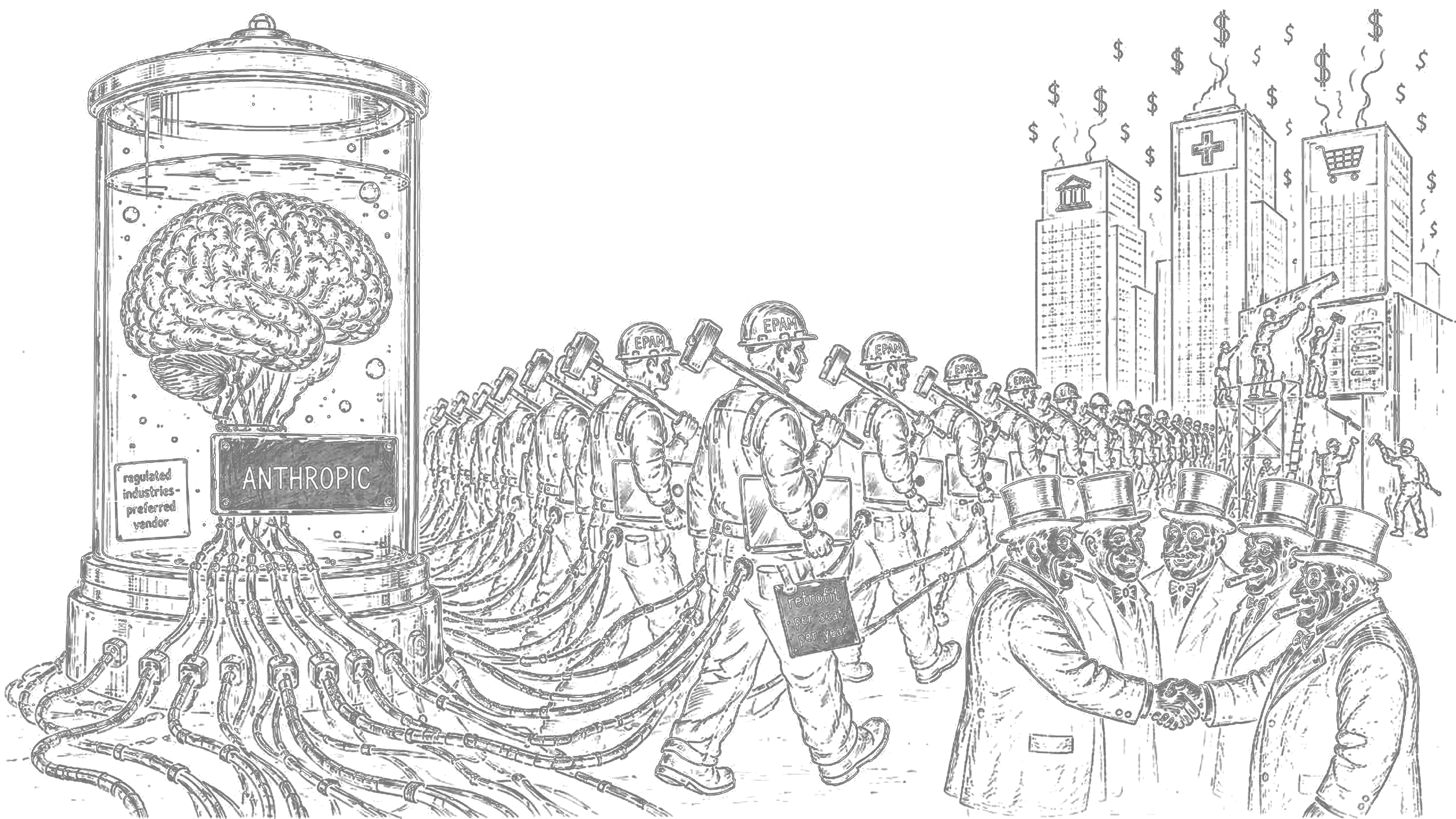

The reason for building it was simple: public benchmarks stopped matching the reality of narrow production tasks.In orchestrator work, tool calling, and other applied flows, some models that destroy public leaderboards still lose hard once the task starts looking like the system you actually run.

The problem is scope.A benchmark with broad coverage can be useful for market orientation, but it is often useless for deciding whether a model fits one concrete job.Bencher treats the benchmark as a simulation of an orchestrator step instead of a general intelligence contest.

- Tool calling: choose the right tools, pass the right parameters, and chain calls in the right order.

- Chart building: generate raw HTML/SVG that can be embedded through an iframe, then score both the code and the visual result.

- Domain fixtures: tasks spread across trading, devops, HR analytics, and API gateway scenarios.

- Prompt quality: the same task can be tested from vague prompt level 1 to precise prompt level 10.

- Difficulty scaling: trivial checks sit next to nested, high-friction cases.

- Runtime and LLM judging: automated checks handle structure and correctness; LLM judges add qualitative scoring where needed.

Bencher uses a ChatOpenAI-compatible message flow, so most OpenAI-compatible providers can be wired through the model ID, API key, and base URL.The README has the working setup notes; provider-specific details still belong in LangChain or provider docs.

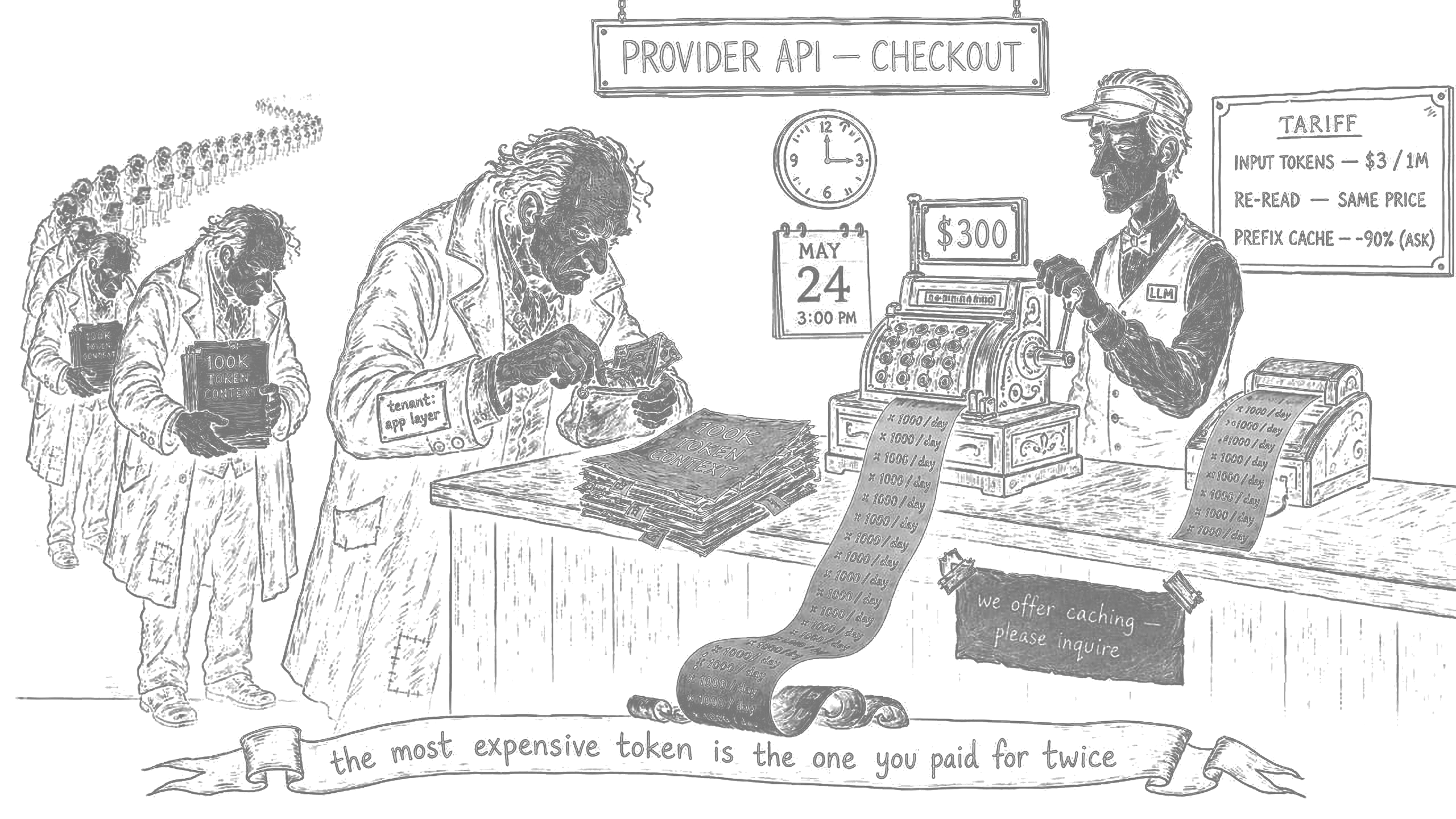

The point is not to let Bencher make the final model decision.I use it as a sieve: first remove models that are obviously wrong for the workload, then compare candidates where the price difference can be 10x, and only then test the close winners live.